Do law firms and CPA firms need an AI usage policy?

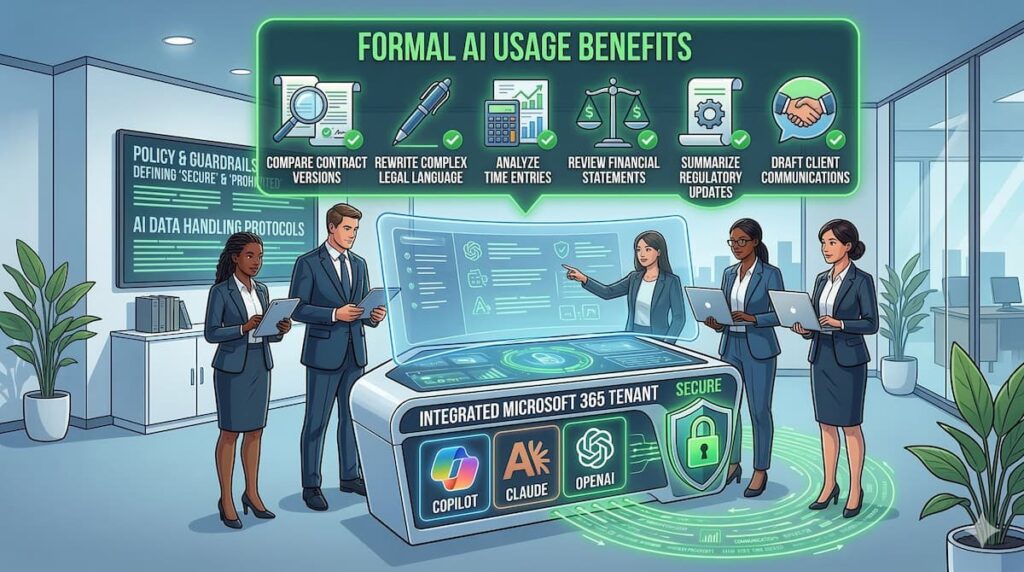

Yes. If your firm handles confidential client data, financial records, tax documents, or privileged communications, you need a formal AI usage policy before allowing tools like Copilot, Claude, or ChatGPT into daily workflows.

Is it safe to use AI for legal or accounting work?

AI can safely support research, contract comparison, document drafting, and financial analysis — but only when deployed in secure, enterprise environments with defined guardrails. Public AI tools should never be used for confidential client material.

What happens if an employee pastes client data into public AI?

It can create confidentiality breaches, ethical violations, regulatory exposure, and reputational damage. In regulated industries, even accidental exposure can have serious consequences.

Why Law Firms and CPA Firms Are Adopting AI — Quietly

Across the country, professional service firms are already using AI behind the scenes.

Attorneys and accountants are leveraging AI to:

- Compare contract versions

- Rewrite complex legal language

- Analyze time entries

- Review financial statements

- Summarize regulatory updates

- Draft client communications

The efficiency gains can be significant, especially for high-volume review and drafting work.

The risk? Most firms are adopting AI faster than they’re governing it.

The Confidentiality Risk No One Is Talking About

Legal and accounting firms operate under strict confidentiality and professional responsibility obligations.

For law firms, that includes:

- Attorney-client privilege

- ABA Model Rule 1.1 on technology competence and ABA Formal Opinion 512 on generative AI tools

- State Bar confidentiality standards

For CPA firms, that includes:

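- IRS data protection requirements, including Publication 4557 (Safeguarding Taxpayer Data) and the Internal Revenue Code Section 7216 limits on disclosing tax return information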

- FTC Safeguards Rule

- GLBA compliance obligations

- Client financial confidentiality

If an employee pastes client tax data, litigation strategy, or merger documents into a public AI tool, you may have:

- A reportable incident

- A regulatory issue

- A reputational crisis

Professional reputation is built over decades — and can be damaged in a single breach.

What an AI Usage Policy Must Include

An AI usage policy is not a one-page memo. It must be specific, enforceable, and aligned with your regulatory obligations.

Approved vs. Prohibited AI Platforms

Your policy should clearly specify:

- Which tools are approved (for example, Microsoft Copilot within your secured tenant)

- Which tools are prohibited for confidential use

- When, if ever, external AI use is permitted

Explicit lists leave employees no room to guess.

Data Classification Rules

Define what may never be entered into public or unapproved AI systems, including:

- Client financial statements

- Tax IDs or Social Security numbers

- Pending litigation strategy

- Draft contracts

- M&A documents

- HR files

Clear examples prevent gray areas that create exposure.
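Written rules can be backed by a technical check. The sketch below is a minimal illustration, assuming a hypothetical screening step that runs on prompt text before it reaches any AI tool. The pattern table and function names are illustrative, not part of Copilot or any other product. It only catches identifiers with a fixed format, such as Social Security numbers and employer identification numbers (EINs). Categories like litigation strategy or draft contracts have no fixed pattern and still depend on the written policy and staff training.

```python
import re

# Hypothetical screening rules (illustrative only, not a product feature).
# SSN: 3-2-4 digits with hyphens, e.g. 123-45-6789.
# EIN: 2-7 digits with a hyphen, e.g. 12-3456789.
SENSITIVE_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "ein": re.compile(r"\b\d{2}-\d{7}\b"),
}


def find_sensitive_identifiers(text: str) -> list[tuple[str, str]]:
    """Return (label, matched text) pairs for every pattern hit in the text."""
    hits = []
    for label, pattern in SENSITIVE_PATTERNS.items():
        for match in pattern.finditer(text):
            hits.append((label, match.group()))
    return hits


def is_safe_to_submit(text: str) -> bool:
    """Block the prompt if any listed identifier format appears in it."""
    return not find_sensitive_identifiers(text)


prompt = "Summarize the return for client SSN 123-45-6789, EIN 12-3456789."
print(find_sensitive_identifiers(prompt))
# → [('ssn', '123-45-6789'), ('ein', '12-3456789')]
print(is_safe_to_submit(prompt))  # → False
```

A pattern check like this is a backstop, not a substitute for the classification rules themselves.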

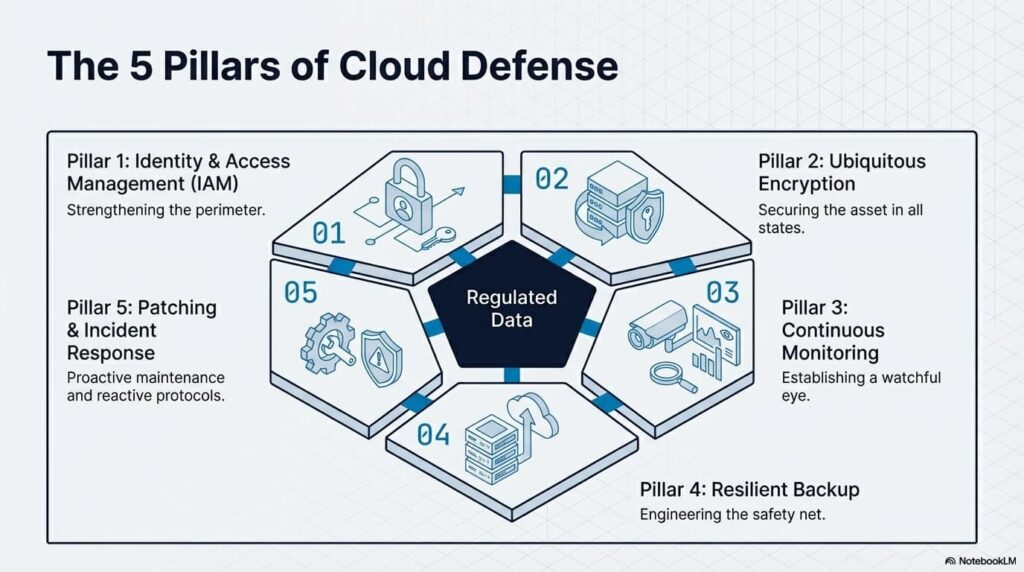

Secure Deployment Requirements

If using Microsoft Copilot, your policy should require:

- Operation inside your Microsoft 365 tenant

- Confirmation that work data is not used for public model training

- Multi-factor authentication for all users

- Audit logging enabled and monitored

AI must be tied to your identity management system — not floating outside it.

Documentation & Monitoring

A strong policy should outline:

- Logging AI usage activity

- Monitoring for anomalies

- Reporting procedures for accidental exposure

- Ongoing compliance reviews

AI governance is continuous — not one-time.

How Law Firms Are Using AI Safely

Contract Version Comparison

Within secure enterprise environments, firms are using AI to:

- Compare redlined agreements

- Highlight clause differences

- Identify missing indemnification language

- Accelerate due diligence

When done inside a controlled tenant, this improves turnaround time without exposing client data.

Legal Drafting & Readability

AI can:

- Simplify legal language for client understanding

- Reformat pleadings

- Generate structured outlines

However, attorneys must review every output. AI is assistive — not authoritative.

Time & Billing Analysis

AI tools can help firms:

- Compare quoted hours to logged hours

- Identify time entry inconsistencies

- Spot workflow inefficiencies

Improved billing oversight strengthens profitability and client transparency.
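As a rough illustration of comparing quoted hours to logged hours, the sketch below flags matters whose logged time deviates from the quote by more than a set tolerance. The `Matter` record, the 10% default tolerance, and the function name are assumptions for this example, not features of any billing system or AI tool.

```python
from dataclasses import dataclass


@dataclass
class Matter:
    """Hypothetical per-matter record: hours quoted to the client vs. hours logged."""
    name: str
    quoted_hours: float
    logged_hours: float


def flag_hour_variances(matters: list[Matter], tolerance: float = 0.10) -> list[tuple[str, float]]:
    """Return (matter name, variance %) for matters outside the tolerance.

    A positive variance means more hours were logged than quoted;
    a negative variance means fewer.
    """
    flagged = []
    for matter in matters:
        if matter.quoted_hours <= 0:
            continue  # nothing was quoted, so there is no baseline to compare against
        variance = (matter.logged_hours - matter.quoted_hours) / matter.quoted_hours
        if abs(variance) > tolerance:
            flagged.append((matter.name, round(variance * 100, 1)))
    return flagged


matters = [
    Matter("Lease review", quoted_hours=10, logged_hours=13),
    Matter("NDA", quoted_hours=4, logged_hours=4.2),
    Matter("Deposition prep", quoted_hours=20, logged_hours=15),
]
print(flag_hour_variances(matters))
# → [('Lease review', 30.0), ('Deposition prep', -25.0)]
```

Flagging both overruns and underruns is a design choice: underruns can point to unbilled time just as overruns can point to scope creep.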

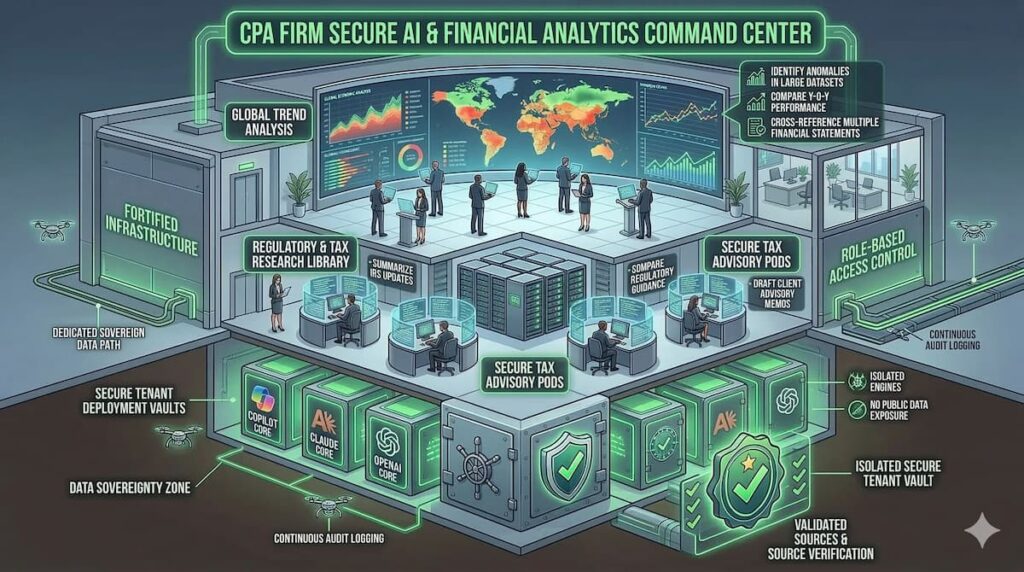

How CPA Firms Are Leveraging AI Securely

Financial Trend Analysis

Using Copilot in Excel, firms can:

- Identify anomalies in large datasets

- Compare year-over-year performance

- Cross-reference multiple financial statements

- Generate executive summaries for clients

This analysis must occur within a secure tenant — not in public AI interfaces.
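Comparing year-over-year performance comes down to simple arithmetic. The sketch below is a hypothetical illustration, not a description of how Copilot in Excel works internally: it flags line items whose change from the prior year exceeds a chosen threshold (25% here, an arbitrary assumption), producing the kind of short list a reviewer would then investigate.

```python
def flag_yoy_changes(
    current: dict[str, float],
    prior: dict[str, float],
    threshold: float = 0.25,
) -> dict[str, float]:
    """Return {line item: % change} for items whose year-over-year change exceeds the threshold."""
    flagged = {}
    for item, current_value in current.items():
        prior_value = prior.get(item)
        if prior_value is None or prior_value == 0:
            continue  # no usable prior-year baseline for this line item
        change = (current_value - prior_value) / abs(prior_value)
        if abs(change) > threshold:
            flagged[item] = round(change * 100, 1)
    return flagged


current_year = {"Revenue": 1_200_000, "Travel expense": 90_000, "Rent": 60_000}
prior_year = {"Revenue": 1_100_000, "Travel expense": 40_000, "Rent": 60_000}
print(flag_yoy_changes(current_year, prior_year))
# → {'Travel expense': 125.0}
```

A flagged item is a prompt for human review, not a conclusion; the accountant still decides whether the change is an error, a reclassification, or a genuine business event.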

Regulatory & Tax Research

AI can assist with:

- Summarizing IRS updates

- Comparing regulatory guidance

- Drafting client advisory memos

But source validation is critical. AI responses may sound authoritative while omitting nuance or updates.

Why Microsoft Copilot Is Often the Preferred Foundation

For professional service firms embedded in Microsoft 365, Copilot offers:

- Tenant-level data protection

- No public training on your firm’s data

- Role-based access control

- Administrative oversight

- Integration with Outlook, Teams, Word, and Excel

This creates a controlled AI environment aligned with confidentiality requirements.

Why Choose HD Tech for AI Governance?

HD Tech provides comprehensive managed IT services and cybersecurity for growing businesses nationwide. We are based in Orange County, California, and support law firms and accounting firms across the United States.

Since 1996, we’ve helped professional service organizations:

- Secure Microsoft 365 environments

- Deploy Copilot within controlled tenants

- Develop enforceable AI usage policies

- Implement endpoint protection and monitoring

- Align IT infrastructure with regulatory requirements

We don’t just enable AI tools.

We build guardrails around them.

Frequently Asked Questions About AI in Law & CPA Firms

Can attorneys ethically use AI tools?

Yes, provided they maintain confidentiality, competence, and supervision over AI-generated work. Attorneys remain responsible for the accuracy and privacy of any AI-assisted output.

Is Microsoft Copilot safer than public ChatGPT for client work?

Yes, when it is properly configured. Copilot operates within your Microsoft tenant and does not use your work data to train public models. Public AI tools should not be used for confidential client information.

Should small firms have an AI policy?

Absolutely. Even small firms handling tax returns or litigation documents face liability if employees use AI without clear guidelines. Size does not reduce regulatory exposure.

How often should an AI policy be reviewed?

At least annually — and whenever new AI tools are introduced. Regulatory expectations and AI capabilities evolve rapidly.

What’s the first step to secure AI adoption?

Conduct an AI risk assessment to identify which tools employees are currently using, what data is being entered, and whether your Microsoft environment is properly configured.

Ready to Implement AI Without Compromising Confidentiality?

AI can streamline research, improve document comparison, and enhance operational efficiency.

But for law firms and CPA firms, confidentiality is non-negotiable.

HD Tech delivers comprehensive managed IT services and cybersecurity for organizations nationwide. Based in Orange County, California, we provide 24/7 monitoring, rapid incident response, secure Microsoft 365 deployments, and AI governance frameworks designed for regulated industries.

Since 1996, we’ve protected over 100 companies — including law firms, accounting practices, and professional service organizations.

If you want to implement AI the right way, with clear policies, secure deployment, and ongoing oversight, call HD Tech at 877-540-1684.

Secure your AI strategy before it becomes a liability.

The post Why Law Firms & Accounting Firms Need a Formal AI Usage Policy Before Adopting Copilot or ChatGPT first appeared on HD Tech.

source https://hdtech.com/why-law-firms-accounting-firms-need-a-formal-ai-usage-policy-before-adopting-copilot-or-chatgpt/
